Keynotes

Charles Elkan – University of California and Amazon

Title: From Practice to Theory in Learning from Massive Data

Charles Elkan is a professor of computer science at the University of California, San Diego, currently on leave as an Amazon Fellow and leader of machine learning for Amazon in Seattle and Silicon Valley. In the past, he has been a visiting associate professor at Harvard. His published research has been mainly in machine learning, data science, and computational biology; the MEME algorithm that he developed with Ph.D. students has been used in over 3000 published research projects in biology and computer science. He is fortunate to have had inspiring undergraduate and graduate students who are now in leadership positions, such as vice president at Google.

Abstract: This talk will discuss examples of how Amazon applies machine learning to large-scale data, and open research questions inspired by these applications. One important question is how to distinguish between users who can be influenced and those who are merely likely to respond. Another question is how to measure and maximize the long-term benefit of movie and other recommendations. A third question is how to share data while provably protecting the privacy of users. Note: Information in the talk is already public, and opinions expressed will be strictly personal.

Joseph Bradley – Apache Spark PMC

Title: Foundations for Scaling ML in Apache Spark

Joseph Bradley is a Software Engineer and Apache Spark PMC member working on machine learning and graph processing at Databricks. Previously, he was a postdoc at UC Berkeley after receiving his Ph.D. in Machine Learning from Carnegie Mellon University in 2013. His research included probabilistic graphical models, parallel sparse regression, and aggregation mechanisms for peer grading in MOOCs.

Abstract: Apache Spark has become the most active open source Big Data project, and its Machine Learning library MLlib has seen rapid growth in usage. A critical aspect of MLlib and Spark is the ability to scale: the same code used on a laptop can scale to hundreds or thousands of machines. This talk will describe ongoing and future efforts to make MLlib even faster and more scalable by integrating with two key initiatives in Spark. The first is Catalyst, the query optimizer underlying DataFrames and Datasets. The second is Tungsten, the project for approaching bare-metal speeds in Spark via memory management, cache-awareness, and code generation. This talk will discuss the goals, the challenges, and the benefits for MLlib users and developers. More generally, we will reflect on the importance of integrating ML with the many other aspects of big data analysis.

About MLlib: MLlib is a general Machine Learning library providing many ML algorithms, feature transformers, and tools for model tuning and building workflows. The library benefits from integration with the rest of Apache Spark (SQL, streaming, Graph, core), which facilitates ETL, streaming, and deployment. It is used in both ad hoc analysis and production deployments throughout academia and industry.

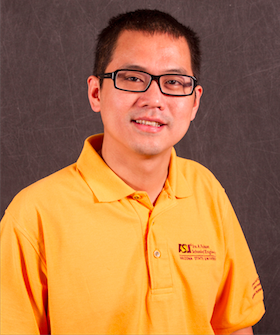

Hanghang Tong – Arizona State University

Title: Inside the Atoms: Mining a Network of Networks and Beyond

Hanghang Tong has been an assistant professor in the School of Computing, Informatics, and Decision Systems Engineering (CIDSE) at Arizona State University since August 2014. Before that, he was an assistant professor in the Computer Science Department at City College, City University of New York, a research staff member at the IBM T.J. Watson Research Center, and a postdoctoral fellow at Carnegie Mellon University. He received his M.Sc. and Ph.D. degrees from Carnegie Mellon University in 2008 and 2009, respectively, both in Machine Learning. His research interest is in large-scale data mining for graphs and multimedia. He has received several awards, including one ‘test of time’ award (ICDM 10-Year highest impact paper award), four best paper awards, and four ‘best of conference’ selections. He has published over 100 refereed articles and more than 20 patents.

Abstract: Networks (i.e., graphs) appear in many high-impact applications. Often these networks are collected from different sources, at different times, and at different granularities. In this talk, I will present our recent work on mining such multiple networks. First, we will present two models: one for a set of inter-connected networks (NoN), and the other for a set of inter-connected co-evolving time series (NoT). For both models, we will show that by treating networks as context, we are able to model more complicated real-world applications. Second, we will present some algorithmic examples of how to mine with these new models, including ranking, imputation, and prediction. Finally, we will demonstrate the effectiveness of our new models and algorithms in some applications, including bioinformatics and sensor networks.